(Examples) When To Use Consistent Hashing In An Interview

I remember when I was studying for my system design interviews almost half a year ago. I was studying system design for the first time with minimal prior real-world system design experience. When it came to the topic of consistent hashing, I was able to understand it on a basic level, however, I wasn’t able to identify relevant ways to actually use consistent hashing.

Other resources online would claim that they would show you how and when to use consistent hashing when in reality they spend 95% of the time describing how consistent hashing works instead of the use cases.

In this article, I will be solely focused on describing cases and situations when you would want to use consistent hashing in a real system or in a system design interview.

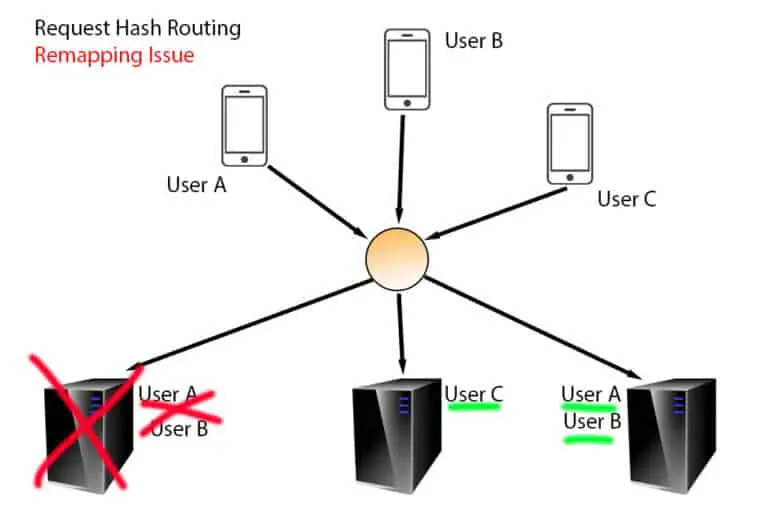

So in a nutshell, when should you use consistent hashing?

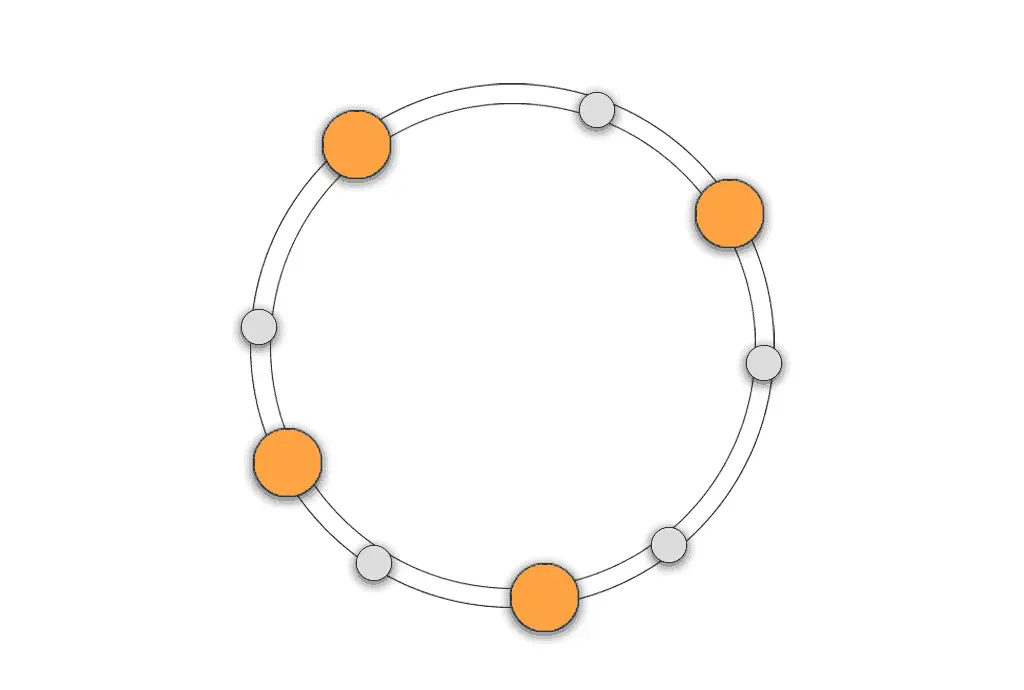

Consistent hashing should be used in a system design interview when you want to spread an amount of load evenly across a dynamic-sized set of targets. With consistent hashing, a change in the size of the targets will minimally affect the existing mapping of keys to target values.

Just because you can use consistent hashing, it doesn’t mean that you always should. The consistent hashing algorithm isn’t free and requires overhead, resources, and compute to maintain. It’ll be relatively slower than other key-to-target mapping functions like round-robin and simple-failover.

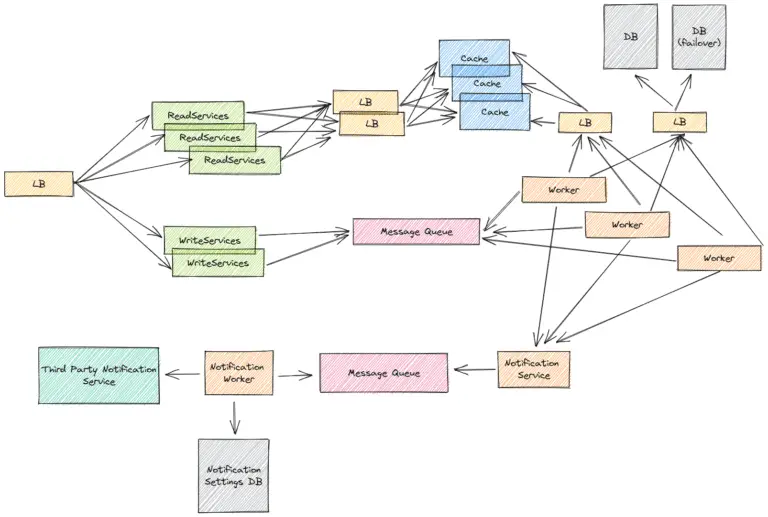

Example 1 – Distributing Load Across Caches

A common component of distributed system design is a cache. A cache, in most cases, utilizes memory instead of disk to read from and write to which reduces latencies by significant orders of magnitude.

There are different ways of creating caches which have different pros and cons. Caches can be on a server itself making the server stateful, or a cache can be external to all the servers making the servers stateless.

In the case of having stateful servers that cache data, which is an optimization for simplicity and low latency compared to having stateless servers that access caches, you’ll want to route requests to the same server where the request’s cached data already exists.

If a server (and an associated cache) is added or removed, you’d want the request-to-cache mappings to be as minimally affected as possible to reduce the number of cache misses. The consistent hashing algorithm helps in this case by having k/n (on average) remappings where k is the number of keys and n is the number of servers.

If you were just modding the keys for server values (ie taking the request hash and modding it by the number of available servers), you’d have k average reorderings. k/n is going to be less than or equal to k.

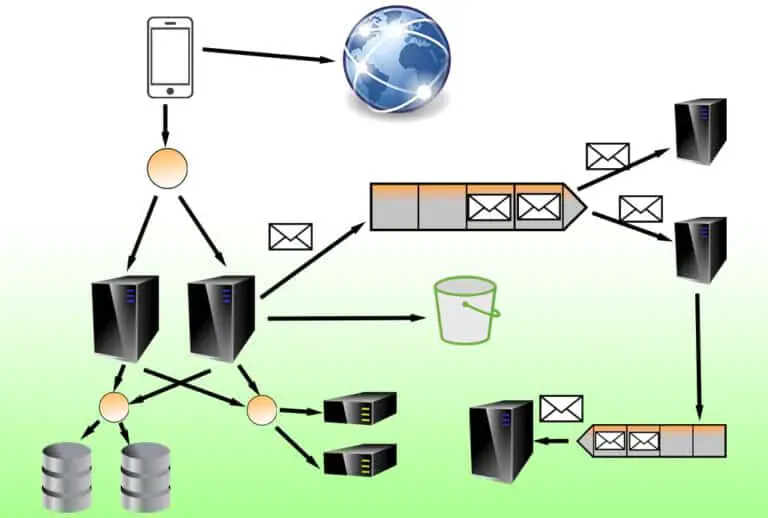

Example 2 – Distributing Load Evenly Across Servers

It’s important that you understand the concept and usage of virtual nodes within a consistent hashing algorithm and how they play a role in the probabilistic even distribution of requests to servers. Adding virtual nodes for a server will increase the odds of a server having traffic routed to it, and removing virtual nodes for a server will decrease the odds of a server having traffic routed to it.

The consistent hashing algorithm is great if you have stateless web servers. Because the consistent hashing algorithm uses virtual nodes to increase the probability of having an even distribution of requests-to-servers, the virtual node ratios between each other can be dynamically adjusted to tune distribution even further.

This means that if you add a server, you can temporarily add more virtual nodes for the server while it doesn’t have as much traffic as the prior servers. In the case you’re vertically scaling your servers, you might want to do this for the new servers that are being added as well because they can support more traffic than the rest.

If you’re trying to do something like A/B testing in a simple way, you can also change the number of virtual node ratios between your servers to allow more or fewer requests to be routed. That said, A/B testing is usually done through other mechanisms like using load balancers for distributing the request loads. The solution that’s right for you will depend on your requirements and use cases.

Using consistent hashing can help you in a lot of use cases, especially during a system design interview. Some of these things may be worth discussing because they’ll help express your depth of knowledge and thought, even if you aren’t planning on actually implementing your design with it.

Example 3 – Distributing Load Across Databases

In a distributed system at scale, you’re usually going to need more than one database to store all your data. For instance, Google can’t store the billions of search queries a day that accumulate over decades in a single database. As a result, you’ll want to maintain database clusters.

A database cluster is a collection of two or more databases that are controlled by a database server, and consistent hashing can be used to distribute load across a database cluster.

Depending on your system design interview, this might be worth discussing if the focus of a discussion is on creating a highly available and highly durable database service. Cassandra is a popular NoSQL database choice that uses consistent hashing to manage data going into its database clusters.

Consistent hashing within the context of database clusters allows the database server to know where a database record should be placed or should exist within the database cluster. Consistent hashing also allows you to tune the number of records to bias towards one database cluster node or another if certain nodes can handle more transactions than others.

If replication is enabled, that data is then duplicated to nearby cluster nodes for high availability and durability. The database cluster replication process can be synchronous or asynchronous.

With synchronous replication, you get higher data durability because you know that the transaction will only be marked as successfully complete if it’s able to be confirmed as existing across multiple nodes. This is at the expense of having higher latencies for database writes because of how much longer synchronous calls can take compared to asynchronous calls (which may not be complete).

With asynchronous replication, you get higher data availability and lower-latency writes because you don’t need to wait for the writes to complete on the target replication nodes. This is at the expense of having lower data durability because the replication writes could fail, and if the original target node is unavailable or has its data center destroyed, the data will be unrecoverable.