When To Use Load Balancers (System Design Interview)

Load balancers aren’t a difficult concept, but they are an important one for the system design interview. If you don’t understand the basics of when and why to use load balancers, you will struggle to design large, scalable distributed systems.

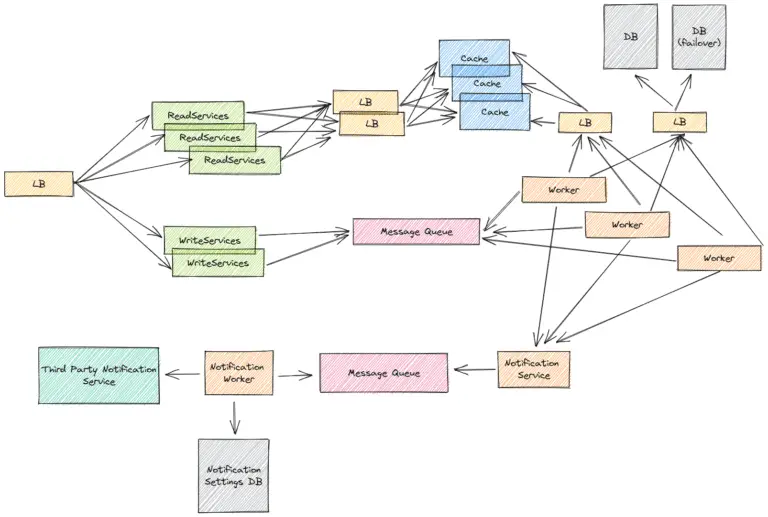

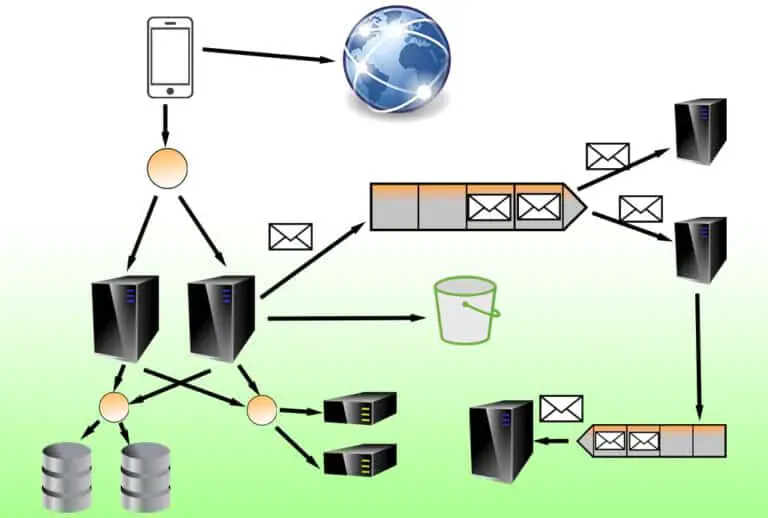

Load balancers act as reverse proxies which sit in front of related services or servers and direct traffic to them according to the load balancer’s routing policy.

A load balancer should be used when you want to distribute tasks or requests resiliently within a system. Load balancers are needed because the servers that handle requests and tasks have limited computational power and memory. Using load balancers lets you avoid single points of failure (SPOFs) among your servers and scale the load your system can handle horizontally by adding more servers behind the load balancer.

While load balancers can be used in many cases, they can’t be used in all cases. For example, you can’t put your own load balancer in front of certain third-party services that you call through an API. In this case, you have to trust the provider’s underlying implementation to handle the load of your use case.

Usually, you’ll want to use load balancers to distribute load across stateless servers or database cluster instances. You can also use load balancers to distribute load across services deployed in different geographic regions for higher resiliency.

In this article, I will be going over how to identify when to use load balancers and why you should use load balancers. I will also be covering the common areas where load balancers should be used within a distributed system.

Why Should You Use a Load Balancer?

If you’re a company like Google that processes millions of requests per second for Google Search, all of that traffic cannot be processed by a single machine (no matter how powerful the machine is).

Even if a single machine could handle all the Google Search requests, no Google Search traffic could be served if that machine went offline for any reason, such as a power disconnection, an internet outage, a hardware failure, or a natural disaster.

Designing distributed systems that are used in the real world is different from designing solutions to coding problems. When you’re designing systems, you have to take into account the fact that failure within your system is inevitable, for each of your system components.

You should use a load balancer as a way of mitigating failure within a system because it can detect unhealthy services and redirect traffic to healthy ones. If your system is getting overloaded, you can add more healthy service instances for the load balancer to route to and spread the load further.
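As a rough illustration of that idea, here is a minimal Python sketch of a balancer that skips targets that failed their last health check and accepts new targets at runtime. The names `Target`, `HealthAwareBalancer`, and the `probe` callback are hypothetical and only stand in for whatever health check a real load balancer performs.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Target:
    name: str
    healthy: bool = True


class HealthAwareBalancer:
    """Rotates through targets, skipping any that failed the last health check."""

    def __init__(self, targets: List[Target]) -> None:
        self.targets = list(targets)
        self._next = 0

    def add_target(self, target: Target) -> None:
        # Scaling out: new capacity becomes routable as soon as it is added.
        self.targets.append(target)

    def run_health_checks(self, probe: Callable[[Target], bool]) -> None:
        # `probe` is an assumed callback, e.g. an HTTP ping to a health endpoint.
        for target in self.targets:
            target.healthy = probe(target)

    def route(self) -> Target:
        # Try each target at most once before giving up.
        for _ in range(len(self.targets)):
            target = self.targets[self._next % len(self.targets)]
            self._next += 1
            if target.healthy:
                return target
        raise RuntimeError("no healthy targets available")
```

In this sketch, a target that fails its probe simply stops receiving traffic until a later health check marks it healthy again.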

Something that was difficult for me to grasp initially when I was learning system design was what to do about the single point of failure of a load balancer. If a load balancer is used to distribute load across multiple services or servers, isn’t the single load balancer a single point of failure itself now?

To make your system truly resilient, you should run at least two load balancers that have the same settings and target services. That way, when a service tries to reach a load balancer and that load balancer is unavailable, it can fall back to a different, healthy load balancer.
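One way to picture this redundancy is a caller that tries each load balancer in turn. The sketch below is a simplified, client-side version of that idea; the function name `send_with_failover` and the `send` callback are hypothetical. In practice this failover is often handled at the DNS or network layer rather than in application code.

```python
from typing import Callable, Optional, Sequence


def send_with_failover(balancers: Sequence[str], send: Callable[[str], str]) -> str:
    """Try each load balancer address in order and return the first successful response."""
    last_error: Optional[Exception] = None
    for address in balancers:
        try:
            # `send` is an assumed callback that raises ConnectionError on failure.
            return send(address)
        except ConnectionError as exc:
            last_error = exc  # remember the failure and try the next balancer
    raise ConnectionError("all load balancers are unavailable") from last_error
```

Because every load balancer has the same settings and targets, it does not matter which one ends up serving the request.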

TIP: You might not have to actually draw out the redundancies within the context of a system design interview if you’re able to communicate with your interviewer what you’re trying to do. This will allow you to save time to talk about other things if your interviewer is fine with it and understands you.

Should You Use a Load Balancer or API Gateway?

Something that used to confuse me when I was starting out with system design preparation was whether I should use a load balancer or API Gateway.

If you look on YouTube for sample system design practice, you’ll see that people sometimes use API Gateways and sometimes use load balancers to route traffic to their application. The difference is easy to understand once it is spelled out.

A load balancer is different from an API Gateway because it distributes traffic across instances of the same microservice. An API Gateway distributes traffic across multiple microservices by acting as a central entry point for requests, which can then be transformed for the backend (e.g. a user sends HTTP requests that are converted to gRPC on the backend).

API Gateways also provide the user with various features that load balancers don’t necessarily provide out of the box. With an API Gateway, you can do things like throttle users, validate requests and responses, handle the caching of responses, and more.

Typically, you’d want to use an API Gateway in conjunction with load balancers in front of your microservices. The API Gateway handles much of the API verification and throttling (overhead such as authentication that the microservices no longer have to worry about), and if the request is valid, it is passed on to the backend microservices, each of which has a load balancer sitting in front of it.
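To make the division of labor concrete, here is a toy Python sketch. The `ApiGateway` class, its route table, and the string responses are all hypothetical. Throttling is reduced to a simple per-key request counter with no time window, and each service's load balancer is approximated by a round-robin pool.

```python
import itertools
from typing import Dict, Iterator, List, Set


class ApiGateway:
    """Authenticates and throttles requests, then hands them to a per-service pool."""

    def __init__(self, routes: Dict[str, List[str]], api_keys: Set[str], limit: int) -> None:
        # One round-robin pool per path prefix stands in for each service's load balancer.
        self._pools: Dict[str, Iterator[str]] = {
            prefix: itertools.cycle(instances) for prefix, instances in routes.items()
        }
        self._api_keys = api_keys
        self._limit = limit
        self._used: Dict[str, int] = {}

    def handle(self, api_key: str, path: str) -> str:
        if api_key not in self._api_keys:
            return "401 unauthorized"
        if self._used.get(api_key, 0) >= self._limit:
            return "429 too many requests"
        self._used[api_key] = self._used.get(api_key, 0) + 1
        # Gateway concern: choose the microservice by path prefix.
        for prefix, pool in self._pools.items():
            if path.startswith(prefix):
                # Load balancer concern: choose an instance of that microservice.
                return f"forwarded to {next(pool)}"
        return "404 no such service"
```

The gateway decides which microservice a request belongs to, while the pool behind it decides which instance of that microservice serves it.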

Basically, if you’re in a system design interview and are required to develop a small feature, it might be preferable to omit an API Gateway and just use load balancers for your service. If you’re developing a larger system in a system design interview that spans multiple microservices, then you might want to consider using an API Gateway in conjunction with load balancers.

What Are Load Balancer Routing Policies (Pros and Cons)

Load balancer routing policies define how a load balancer will distribute load across its target instances.

There are many load balancing policies, and they can be combined with each other to provide custom routing to target instances. However, in this article, I will only cover some of the simplest and most practical load balancer routing policies that may apply to a system design interview.

Round Robin

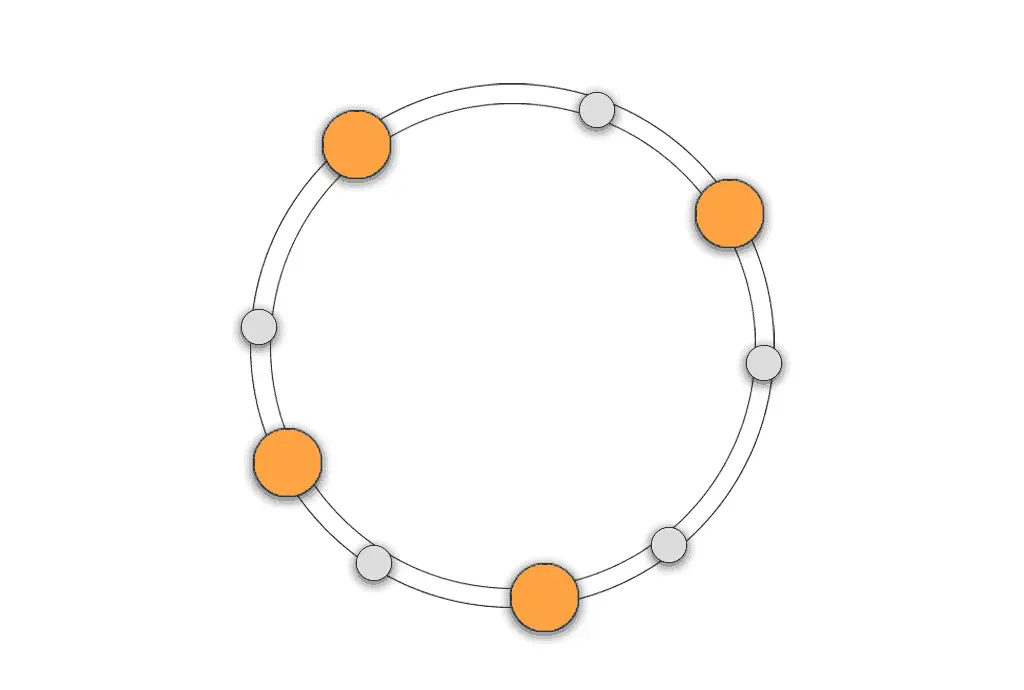

Round-robin routing rotates the assignment of requests, in a circular manner, through all the available servers. This is probably one of the simplest routing policies, and it works well for requests that are fast to process and servers that have equal resources.
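A minimal Python sketch of round-robin routing might look like the following. The class name `RoundRobinBalancer` and the server labels are illustrative only.

```python
import itertools
from typing import Iterator, Sequence


class RoundRobinBalancer:
    """Hands out servers in a fixed circular order."""

    def __init__(self, servers: Sequence[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        # itertools.cycle repeats the sequence forever: s1, s2, s3, s1, ...
        self._cycle: Iterator[str] = itertools.cycle(servers)

    def route(self) -> str:
        return next(self._cycle)


balancer = RoundRobinBalancer(["s1", "s2", "s3"])
print([balancer.route() for _ in range(5)])  # ['s1', 's2', 's3', 's1', 's2']
```

Note that this policy ignores how busy each server is, which is why it suits short requests and servers with equal resources.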

Request Hashing

Request hashing takes a single attribute or a collection of attributes from a request and hashes the value to find the particular server that the request belongs to. Often, the attribute that gets hashed is the client’s IP address.

You could run into a hotspot problem, where traffic isn’t evenly distributed across the targets of a load balancer, if the hash key is poorly chosen. Also, if your application’s use case requires that a request keeps going to the same target even when servers are added or removed, you’ll want to consider something like a consistent hashing algorithm instead.
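The sketch below shows one common form of request hashing, assuming the server is chosen as hash(client IP) modulo the number of servers. The helper names are hypothetical. The `count_remapped` function illustrates why this form struggles when the server list changes.

```python
import hashlib
from typing import Iterable, Sequence


def stable_hash(key: str) -> int:
    # Python's built-in hash() is randomized per process for strings,
    # so a cryptographic digest is used to get a stable value.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def route_by_hash(client_ip: str, servers: Sequence[str]) -> str:
    """Pick a server from the hash of the client IP (simple modulo scheme)."""
    return servers[stable_hash(client_ip) % len(servers)]


def count_remapped(keys: Iterable[str], old: Sequence[str], new: Sequence[str]) -> int:
    """Count how many keys land on a different server after the server list changes."""
    return sum(route_by_hash(k, old) != route_by_hash(k, new) for k in keys)


ips = [f"10.0.{i // 256}.{i % 256}" for i in range(1000)]
four = ["s0", "s1", "s2", "s3"]
five = four + ["s4"]
print(count_remapped(ips, four, five))  # most of the 1000 keys move
```

With the modulo scheme, going from four to five servers changes the target for most keys, which is the problem consistent hashing is meant to reduce.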

Along with request hashing, if you’re able to obtain the user’s IP, you can do geography-related hashing or routing which could be beneficial for a user in terms of latency. If a user is from France, you could have a geo-routing policy to route the user to EU or France-specific servers. The same could be done with US servers and users from the United States.

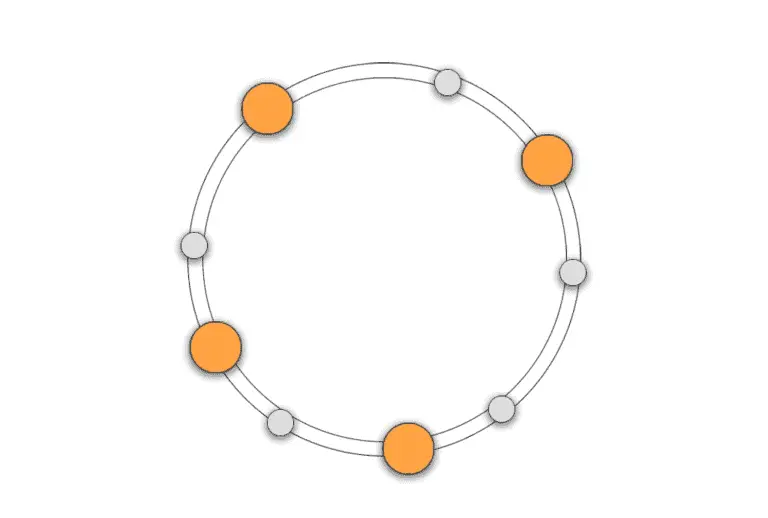
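The geo-routing idea described above can be sketched as a lookup from country to regional server pool. This assumes the user's country code has already been resolved from their IP by some GeoIP lookup, which is not shown. The tables and the default region below are made up for illustration.

```python
from typing import Dict, List

# Hypothetical regional pools and country mapping, for illustration only.
REGION_POOLS: Dict[str, List[str]] = {
    "EU": ["eu-server-1", "eu-server-2"],
    "US": ["us-server-1", "us-server-2"],
}
COUNTRY_TO_REGION: Dict[str, str] = {"FR": "EU", "DE": "EU", "US": "US"}
DEFAULT_REGION = "US"  # assumed fallback for countries without a mapping


def pool_for_country(country_code: str) -> List[str]:
    """Return the server pool for the region that serves this country."""
    region = COUNTRY_TO_REGION.get(country_code.upper(), DEFAULT_REGION)
    return REGION_POOLS[region]


print(pool_for_country("FR"))  # ['eu-server-1', 'eu-server-2']
```

Within the chosen pool, any of the other routing policies in this article can then pick the individual server.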

Consistent Hashing

Consistent hashing routing is a tunable way to distribute load across multiple target servers. A key feature of consistent hashing is that only about k/n request-to-server mappings need to move whenever a server is added or removed, where k is the number of keys (requests) and n is the number of servers. This is significantly better than having to remap potentially all the request-to-server mappings, as can happen with plain request hashing.
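Here is a minimal Python sketch of a consistent hash ring, assuming the common design where each server is placed on the ring at several "virtual node" positions and a key is routed to the first position at or after its hash. The class name, the `vnodes` parameter, and the `server#i` labeling are illustrative choices, not a specific product's implementation.

```python
import bisect
import hashlib
from typing import Dict, Iterable, List


def ring_hash(key: str) -> int:
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


class ConsistentHashRing:
    """Maps keys to servers so that adding or removing a server moves few keys."""

    def __init__(self, servers: Iterable[str], vnodes: int = 100) -> None:
        self._vnodes = vnodes            # more virtual nodes gives a more even spread
        self._points: List[int] = []     # sorted positions on the ring
        self._owner: Dict[int, str] = {} # ring position -> server
        for server in servers:
            self.add_server(server)

    def add_server(self, server: str) -> None:
        for i in range(self._vnodes):
            point = ring_hash(f"{server}#{i}")
            self._owner[point] = server
            bisect.insort(self._points, point)

    def remove_server(self, server: str) -> None:
        self._points = [p for p in self._points if self._owner[p] != server]
        self._owner = {p: s for p, s in self._owner.items() if s != server}

    def route(self, key: str) -> str:
        if not self._points:
            raise RuntimeError("ring is empty")
        # First ring position clockwise from the key's hash, wrapping around at the end.
        idx = bisect.bisect_right(self._points, ring_hash(key)) % len(self._points)
        return self._owner[self._points[idx]]
```

When a server is added, the only keys that change owner are the ones that now fall just before one of the new server's positions, so every moved key moves to the new server and the rest stay put.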

Least-connections / Least-bandwidth

As the names imply, least-connections and least-bandwidth routing policies route a request to the server with either the fewest active connections or the least bandwidth in use.

The broader point to understand with these types of routing policies is that the routing can be based on any measurable characteristic of a server. The routing policy could be based on things like the lowest QPS over a rolling time window or the least memory used.
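As a small Python sketch of the least-connections case, the balancer below tracks an active-connection count per server and always picks the minimum. The class and method names are hypothetical. Swapping the counter for another metric (bandwidth, QPS, memory) gives the other variants.

```python
from typing import Dict, Iterable


class LeastConnectionsBalancer:
    """Routes each new request to the server with the fewest active connections."""

    def __init__(self, servers: Iterable[str]) -> None:
        self.active: Dict[str, int] = {server: 0 for server in servers}

    def acquire(self) -> str:
        # Ties go to the server that was registered first.
        server = min(self.active, key=lambda s: self.active[s])
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Call this when the request finishes so the count stays accurate.
        if self.active[server] > 0:
            self.active[server] -= 1
```

Unlike round robin, this policy adapts when some requests take much longer than others, because slow requests keep a server's count high until they finish.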